Each mipmap level of a cubemap has 6 faces, with each face having the same size as the other faces. The width and height of a cubemap must be the same (i.e., cubemaps are squares), but these sizes need not be powers of two.

If OpenGL 4.2 or ARB_texture_storage is available, then immutable storage for a cubemap texture can be allocated with glTexStorage2D.

To allocate mutable storage for the 6 faces of a cubemap's mipmap chain, bind the texture to GL_TEXTURE_CUBE_MAP. Then call glTexImage2D 6 times, using the same size, mipmap level, and internalformat. The target parameter is not GL_TEXTURE_CUBE_MAP; instead, it specifies which of the 6 faces of the cubemap is being allocated. These faces are:

- GL_TEXTURE_CUBE_MAP_POSITIVE_X
- GL_TEXTURE_CUBE_MAP_NEGATIVE_X
- GL_TEXTURE_CUBE_MAP_POSITIVE_Y
- GL_TEXTURE_CUBE_MAP_NEGATIVE_Y
- GL_TEXTURE_CUBE_MAP_POSITIVE_Z
- GL_TEXTURE_CUBE_MAP_NEGATIVE_Z

Cubemaps may have mipmaps, but each face must have the same number of mipmaps. Cubemaps can use any of the filtering modes and other texture parameters.

Uploading pixel data for cubemaps is somewhat complicated. If OpenGL 4.5 or ARB_direct_state_access is available, then glTextureSubImage3D can be used to upload face data for a particular mipmap level. The third-dimension values (zoffset) for glTextureSubImage3D represent which face(s) to upload data to. The faces are indexed in the same order as the list above, so zoffset 0 is the positive X face and zoffset 5 is the negative Z face.

The older mechanism of uploading to faces of a cubemap uses glTexSubImage2D. This function selects which face to upload to via the same mechanism as glTexImage2D does: the GL_TEXTURE_CUBE_MAP_* face targets mentioned above. So if you want to upload to the positive Y face, you would set the target parameter of the call to GL_TEXTURE_CUBE_MAP_POSITIVE_Y.
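Since the rest of this post uses JavaScript, here is a minimal sketch of the mutable-allocation and per-face upload steps described above, written against the WebGL API, whose texImage2D and texSubImage2D mirror the desktop OpenGL calls. It assumes `gl` is a WebGL rendering context (or any object exposing the same methods and enum constants) and an RGBA, unsigned-byte pixel format; the helper names (`faceTarget`, `mipLevelCount`, `allocateCubemap`, `uploadFace`) are hypothetical. WebGL has no glTextureSubImage3D, so faces are selected by target, as in the older glTexSubImage2D path, and WebGL 1 additionally does not allow mipmaps on non-power-of-two textures.

```javascript
// Face order used by OpenGL/WebGL: the six face targets are consecutive enum
// values starting at TEXTURE_CUBE_MAP_POSITIVE_X, and the same order is used
// for the zoffset/layer indices 0..5.
const CUBE_FACES = [
  'POSITIVE_X', 'NEGATIVE_X',
  'POSITIVE_Y', 'NEGATIVE_Y',
  'POSITIVE_Z', 'NEGATIVE_Z',
];

// Map a face index (0..5) to its texture target enum.
function faceTarget(gl, faceIndex) {
  if (!Number.isInteger(faceIndex) || faceIndex < 0 || faceIndex >= CUBE_FACES.length) {
    throw new RangeError(`face index must be 0..5, got ${faceIndex}`);
  }
  return gl.TEXTURE_CUBE_MAP_POSITIVE_X + faceIndex;
}

// Number of levels in a full mipmap chain for a square face of `size` texels:
// halve (rounding down) until the face is 1x1.
function mipLevelCount(size) {
  let levels = 1;
  while (size > 1) {
    size = Math.floor(size / 2);
    levels++;
  }
  return levels;
}

// Allocate mutable storage: bind to TEXTURE_CUBE_MAP, then call texImage2D once
// per face per level with the same size, level and internal format.
// Passing null as the pixel source allocates storage without uploading data.
function allocateCubemap(gl, size, levels) {
  const texture = gl.createTexture();
  gl.bindTexture(gl.TEXTURE_CUBE_MAP, texture);
  for (let level = 0; level < levels; level++) {
    const levelSize = Math.max(1, Math.floor(size / 2 ** level));
    for (let face = 0; face < 6; face++) {
      gl.texImage2D(faceTarget(gl, face), level, gl.RGBA,
                    levelSize, levelSize, 0, gl.RGBA, gl.UNSIGNED_BYTE, null);
    }
  }
  return texture;
}

// Upload RGBA pixels into one face of one mip level; the face is chosen by the
// target argument, exactly as with texImage2D.
function uploadFace(gl, texture, faceIndex, level, width, height, pixels) {
  gl.bindTexture(gl.TEXTURE_CUBE_MAP, texture);
  gl.texSubImage2D(faceTarget(gl, faceIndex), level, 0, 0, width, height,
                   gl.RGBA, gl.UNSIGNED_BYTE, pixels);
}
```

For example, `uploadFace(gl, tex, 2, 0, size, size, pixels)` writes to the positive Y face, because index 2 maps to TEXTURE_CUBE_MAP_POSITIVE_X + 2, which is TEXTURE_CUBE_MAP_POSITIVE_Y.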

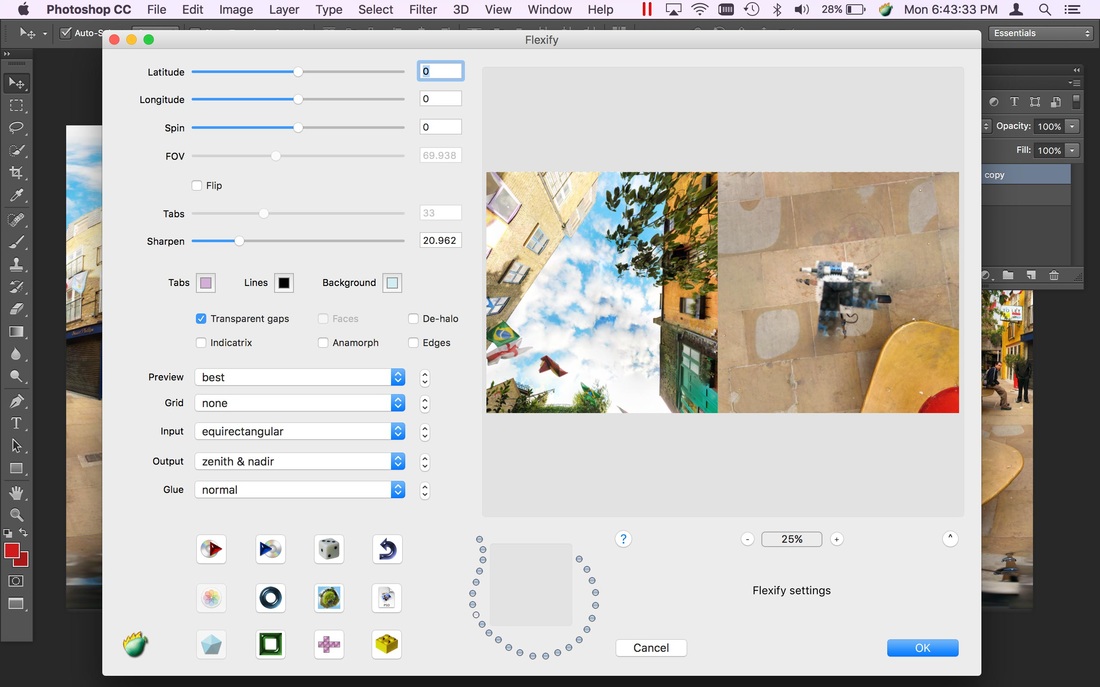

Cubemaps are a texture type, using the type GL_TEXTURE_CUBE_MAP. They are similar to 2D textures in that they have two dimensions.

As mentioned before, each rendering will have a scene and a camera. Three.js provides constructors for both of these things. The camera is created like this:

```javascript
let cameraCube = new THREE.PerspectiveCamera(60, window.innerWidth / window.innerHeight, 1, 100000)
```

The arguments to the camera are the vertical field of view of its frustum (in degrees), the aspect ratio, and the near and far clipping planes; the details are beyond the scope of this post. For almost all cases, these numbers can be considered defaults and need not be changed.

Each cubemap is composed of six images. Normally, you would have to search Google for cubemap images, but those are not the best quality. You can make your own cubemap from normal images and some respectable Photoshop skills, or you can take images from some of the examples that already exist.

In the snippet below, we are just making an array of paths, which is where all our images are kept. In this case, the image for one side of our cube (the positive x side) would be located at the path `/cubemap/cm_x_p.jpg`:

```javascript
let urls = [
  path + '_x_p' + format, path + '_x_n' + format,
  path + '_y_p' + format, path + '_y_n' + format,
  path + '_z_p' + format, path + '_z_n' + format
]
```

This is the part where we actually "create" the cubemap from the images we have. Each scene is defined by a number of "meshes." Each mesh is defined by a geometry, which specifies the shape of the mesh, and a material, which specifies the appearance and coloring of the mesh. We now load the six images defined previously into a "texture cube," which is then used to define our material:

```javascript
let textureCube = (urls, THREE.CubeRefractionMapping)
let material = new THREE.
```

The geometry we used is called a box geometry, and we are defining the length, width, and breadth of this box as 100 units each.
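The hand-written `urls` array in this post can also be built by a small function. This is a minimal sketch: `cubemapUrls` is a hypothetical helper, and the base path `'/cubemap/cm'` and extension `'.jpg'` are assumptions inferred from the example path `/cubemap/cm_x_p.jpg`. The resulting order (+X, -X, +Y, -Y, +Z, -Z) matches the OpenGL face order, and it is also the order that Three.js's `CubeTextureLoader` expects for its six URLs in current releases.

```javascript
// Hypothetical helper: build the six face image paths from a base path and a
// file extension, following the naming scheme <path>_<axis>_<p|n><format>.
function cubemapUrls(path, format) {
  const urls = [];
  for (const axis of ['x', 'y', 'z']) {
    // Positive face first, then negative, giving +X, -X, +Y, -Y, +Z, -Z.
    urls.push(path + '_' + axis + '_p' + format);
    urls.push(path + '_' + axis + '_n' + format);
  }
  return urls;
}

// Assumed values, inferred from the example path /cubemap/cm_x_p.jpg.
const urls = cubemapUrls('/cubemap/cm', '.jpg');
console.log(urls[0]); // /cubemap/cm_x_p.jpg
console.log(urls[5]); // /cubemap/cm_z_n.jpg
```

Generating the list keeps the face order in one place, so a typo in a single suffix cannot silently swap two faces.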
